Do you know, at the program level, how many of your students rely on federal Direct Loans versus self-funding? If a specific degree program at your institution lost its students’ eligibility for those loans, do you know how that would affect enrollment in that program, and your revenue?

These are the kinds of questions that the One Big Beautiful Bill Act (OBBBA) is now forcing university finance leaders to confront. My colleague, Scott Crist, outlined all three of the law’s major higher education provisions when it passed last summer.

Here, I focus on the provision our team has been discussing most with university finance leaders since then: the program-level earnings test. These are the questions that come up most frequently, along with how we’re thinking about them.

What does the earnings test require, and what are the consequences of failing it?

The OBBBA builds on the gainful employment rule, which previously applied only to certificates, and expands accountability to cover all college degrees.

The core question the test asks is straightforward: Are graduates financially better off having earned their degree than they would have been without it?

- For undergraduate programs, the median earnings of working graduates within four years after graduation must exceed the median for high school diploma holders ages 25 to 34 in the same state.

- For graduate programs, median earnings must exceed those of bachelor’s degree holders, using the lowest of three benchmarks: statewide, field-specific within the state, or field-specific nationally. That “lowest of” rule is important: the bar a program must clear is whichever of the three comparisons produces the lowest figure, so the applicable benchmark varies by field and by state.

If a program fails in two of three consecutive years, its students lose eligibility for federal Direct Loans.
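The mechanics described above can be sketched in a few lines of Python. This is a minimal illustration of our reading of the test, not the Department's methodology: the function names are ours, the dollar figures in the usage lines are hypothetical, and details such as how the "year of determination" is set are still subject to guidance.

```python
from typing import Sequence


def graduate_benchmark(statewide: float, field_in_state: float, field_national: float) -> float:
    """Lowest of the three bachelor's-holder earnings benchmarks."""
    return min(statewide, field_in_state, field_national)


def passes_test(program_median: float, benchmark: float) -> bool:
    """A program passes in a given year only if its median earnings exceed the benchmark."""
    return program_median > benchmark


def loses_loan_eligibility(yearly_pass: Sequence[bool]) -> bool:
    """True if any run of three consecutive years contains at least two failures."""
    fails = [not passed for passed in yearly_pass]
    for start in range(len(fails)):
        # Slices near the end are shorter than three years, which still counts:
        # two failures in two years also sit inside a three-year window.
        if sum(fails[start:start + 3]) >= 2:
            return True
    return False


# Hypothetical benchmark values for a single graduate program.
benchmark = graduate_benchmark(statewide=62_000, field_in_state=48_000, field_national=51_000)
print(benchmark, passes_test(55_000, benchmark))

# Fail, pass, fail: two failures inside three consecutive years.
print(loses_loan_eligibility([False, True, False]))
```

Keeping the benchmark selection separate from the pass/fail history makes it easier to swap in final definitions once guidance arrives.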

What’s at stake is Direct Loan eligibility specifically, not Pell Grants and not all Title IV funding. And the financial repercussions apply at the program level; there is no blanket institutional penalty.

How urgent is this?

We know the first measurements begin in July 2027, and the test applies a four-year lookback. This means:

- The first undergraduate class under evaluation is from 2023 — two years before the law was even enacted.

- Institutions will be assessed on outcomes they had no known reason to be managing.

We also know the first sanctions will arrive in 2029.

There remain, however, several uncertainties for the Department of Education (DOE) to clarify in guidance. For example:

- The “year of determination” is undefined and left to DOE discretion.

- An appeals process is mandated, but its basis hasn’t been specified.

- The compliance mechanics could be relatively simple or quite involved.

In our view, this uncertainty is not a reason to wait. The data window is already open, and if you’re going to model your exposure, the time to do it is in the next few months or before your fall enrollment cycle opens — not after the rules are finalized.

Which programs should we be worried about?

All degree programs are covered by the test, and every institution should assess its full portfolio. That said, the conversations we’re having consistently point to graduate programs as the area of elevated risk.

The consensus is that the value of an undergraduate degree relative to a high school diploma will clear the threshold in most cases. Graduate programs face a harder test, partly because of the “lowest of three benchmarks” rule and partly because of structural dynamics in certain labor markets.

Social work is the most visible example. You typically need a master’s degree to be hired in that field, but early-career earnings are modest: roughly $55,000 within the first four years of work. Counseling graduates on licensure tracks earn supervision-level wages. Fine arts programs face similar headwinds nationally.

That said, for most institutions, the percentage of students in programs that actually fail the test may end up being small. But that doesn’t mean you can ignore it; it means you should know your specific exposure. A regional university in the Mountain West with a strong social work program or a teaching-focused college with a robust education school faces very different exposure than a large research university. That variation is precisely why modeling your specific portfolio matters.

These are structural earnings challenges, not program quality issues, but the test doesn’t distinguish between the two. It’s also important to recognize that not all exposure is equal. A program 5% below the threshold is fundamentally different from one 30% below.

What does this mean for university finances?

This is where we see the biggest disconnect. Financial aid offices are beginning to track these requirements. Finance departments face a different problem: the earnings test sits at the intersection of three frequently siloed operations – institutional research (data), financial aid (compliance), and enrollment management (strategy). Because no one clearly owns the issue, the compliance tracking may get done while the revenue modeling does not. That gap creates institutional blind spots.

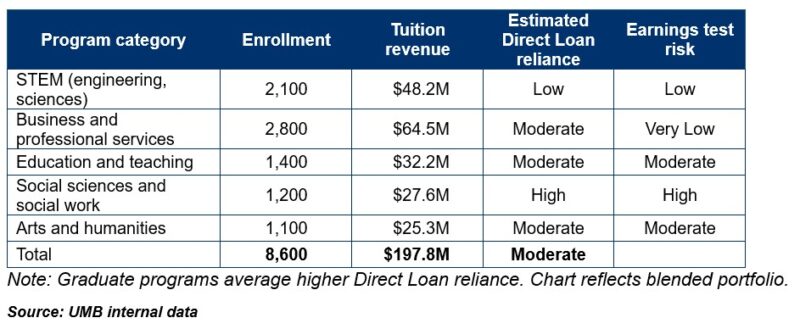

If students in a given program can’t access Direct Loans, some will choose a different institution. For tuition-dependent institutions, that enrollment impact flows straight to revenue. The key variable is how many students in each program are borrowing from the federal government. If most students in a certain program are self-funding, the practical financial impact of losing loan eligibility is limited. But where reliance on Direct Loans is high, the exposure can be significant. Understanding that, program by program, is the starting point of your evaluation.
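A rough way to frame that exposure is revenue multiplied by the share of students who borrow, multiplied by the share of those borrowers who would leave or never enroll. The sketch below uses that simplification. The program names, dollar figures, and departure rates are all hypothetical, and it assumes borrowers and self-funders pay the same net tuition, which won't hold everywhere.

```python
from dataclasses import dataclass


@dataclass
class Program:
    name: str
    net_tuition_revenue: float  # annual, in dollars
    direct_loan_share: float    # fraction of students using Direct Loans (0 to 1)


def revenue_at_risk(program: Program, departure_rate: float) -> float:
    """Revenue lost if `departure_rate` of borrowers leave or never enroll."""
    if not 0.0 <= departure_rate <= 1.0:
        raise ValueError("departure_rate must be between 0 and 1")
    return program.net_tuition_revenue * program.direct_loan_share * departure_rate


# Hypothetical programs with identical revenue but different borrowing profiles.
programs = [
    Program("Program A (mostly self-funded)", 2_000_000, 0.20),
    Program("Program B (loan-reliant)", 2_000_000, 0.90),
]

# Departure rates are scenario assumptions, not observed behavior.
for rate in (0.25, 0.50, 0.75):
    for p in programs:
        print(f"{p.name}: {rate:.0%} departure -> ${revenue_at_risk(p, rate):,.0f} at risk")
```

Running several departure rates side by side matters more than any single estimate, since no one yet knows how borrowers will respond.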

The actual numbers: An illustrative case study

Here’s what a realistic stress test looks like for a mid-sized regional comprehensive university with approximately $200 million in annual operating revenue. This hypothetical institution has 8,600 students, a diverse mission (education, STEM, liberal arts, professional programs), and is tuition-dependent (~52% of revenue from net tuition).

Scenario: Two high-risk programs fail the earnings test in consecutive years

For the sake of argument, let’s assume the social work master’s program (120 students, $3.8 million revenue, 94% Direct Loan reliance) and the Bachelor of Arts in fine arts (180 students, $4.1 million revenue, 71% Direct Loan reliance) both lose Direct Loan eligibility in year two of the measurement period (2028 or 2029 timeframe):

Year 1 impact of losing loan eligibility:

- Reduced operating fund for faculty/program maintenance: $3.5 million annualized

- Loss of fee-based ancillary revenue (parking, housing, dining margins on affected cohorts): $0.8 million

- Potential grant overhead pressure if research labs tied to affected programs face staffing pressure: $0.4–$0.8 million over time

- Total institutional pressure: $14.7–$15.1 million over 24 months

Financial stress indicators:

- Operating margin impact: 8% swing (from ~3% to a near-breakeven or slight deficit scenario)

- Full-time equivalent (FTE) faculty reduction pressure: ~22–28 FTE

- Debt service coverage ratio: potential declines from 1.35x to 1.20x if not mitigated (varies by existing debt structure)

- Moody’s/S&P monitoring: likely triggers analytical review for downgrade risk depending on existing rating and reserve levels
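To see how a dollar figure turns into a margin figure, here is one way to run the arithmetic on the scenario's own inputs. It assumes the 24-month pressure falls evenly across two fiscal years and measures margin against baseline revenue. Both are our simplifications, since the scenario does not specify timing.

```python
ANNUAL_REVENUE = 200.0             # $ millions, from the scenario
STARTING_MARGIN = 0.03             # ~3% operating margin, from the scenario
PRESSURE_24_MONTHS = (14.7, 15.1)  # $ millions over 24 months, from the scenario


def margin_after_pressure(annual_pressure: float) -> float:
    """Operating margin after one year's pressure, as a share of baseline revenue."""
    starting_surplus = ANNUAL_REVENUE * STARTING_MARGIN
    return (starting_surplus - annual_pressure) / ANNUAL_REVENUE


for total in PRESSURE_24_MONTHS:
    annual = total / 2  # assumption: even split across two fiscal years
    print(
        f"${total}M over 24 months: "
        f"{total / ANNUAL_REVENUE:.1%} of one year's revenue cumulatively, "
        f"margin after one year's share = {margin_after_pressure(annual):.1%}"
    )
```

Under those assumptions, the cumulative pressure is between 7% and 8% of one year's revenue, and a single year's share moves a 3% margin to a deficit of less than one percent.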

What mitigates the damage

- Advance modeling: Had the institution identified these programs in 2026–2027, it likely would have had time to:

  - Build affordability into the programs. A lower price point tends to mean wider Direct Loan spread and lower overall risk to individual programs.

  - Explore alternative funding models or restructure (stackable credentials, income-share agreements, employer partnerships, etc.).

  - Make strategic decisions about program viability before the market decides for it.

- Enrollment diversification: Institutions that don’t have concentrated Direct Loan reliance in one or two programs are better insulated. If this scenario were spread across six programs instead of the two shown above, the revenue pressure would drop to $5–$6 million, and margins would remain defensible.

- Reserve strength: An institution with 90+ days of operating reserves can absorb this shock without cascading cuts. Below 60 days, the pressure becomes acute within two fiscal years.

To be clear, individual exposure will be different – perhaps smaller if the institution is more diverse, larger if it’s more concentrated in affected fields. The data above simply suggests what is possible at an institution with this profile. But how do you know unless you model it and assess the risk?
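As one small example of that modeling, the reserve thresholds mentioned above can be translated into days of cushion. The sketch assumes annual expenses equal revenue less the ~3% margin and, as a worst case, that the entire shock is funded from reserves. Neither assumption comes from the scenario itself.

```python
ANNUAL_REVENUE = 200.0   # $ millions, from the case study
OPERATING_MARGIN = 0.03  # ~3%, from the case study
# Assumption: expenses are revenue less the operating margin.
ANNUAL_EXPENSES = ANNUAL_REVENUE * (1 - OPERATING_MARGIN)
DAILY_EXPENSES = ANNUAL_EXPENSES / 365


def days_of_reserves(reserves: float) -> float:
    """Convert a reserve balance ($ millions) into days of operating expenses."""
    return reserves / DAILY_EXPENSES


def days_after_shock(starting_days: float, shock: float) -> float:
    """Reserve days left if the full shock ($ millions) is drawn from reserves."""
    starting_reserves = starting_days * DAILY_EXPENSES
    return days_of_reserves(max(starting_reserves - shock, 0.0))


for start in (90, 60):
    for shock in (14.7, 15.1):
        remaining = days_after_shock(start, shock)
        print(f"{start} days of reserves, ${shock}M shock -> {remaining:.0f} days remaining")
```

On these assumptions the shock consumes roughly 28 days of reserves, which leaves a 90-day institution just above 60 days and a 60-day institution close to 30.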

What should we be doing right now?

The immediate decision isn’t whether to restructure programs. It’s about understanding your exposure. Start by identifying which programs are most likely to fall below the threshold and by how much.

- Cross-reference available earnings data (College Scorecard, Bureau of Labor Statistics, your own institutional research) against the applicable state and field-level benchmarks. The complexity here is matching program codes across datasets, deciding whether to use state-specific or national benchmarks (a choice that could significantly affect your earnings threshold), and understanding what “median earnings” actually captures when your cohorts include part-time completers and non-finishers. This work typically requires coordination across three or more departments.

- Determine where Direct Loan reliance is concentrated.

- Make sure the people who need to be in this conversation—finance, financial aid, institutional research, academic leadership—are communicating and collaborating.
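Once the data is joined, the first two steps above reduce to a simple screen: how far each program sits from its benchmark, and how much revenue is tied to borrowers. The sketch below is one way to set that up. The record fields, program codes, figures, and the 10% watch margin are all hypothetical, and the difficult part in practice is assembling the inputs, not the calculation.

```python
from dataclasses import dataclass


@dataclass
class ProgramRecord:
    program_code: str           # hypothetical identifier used to join datasets
    median_earnings: float      # from earnings data, in dollars
    benchmark: float            # applicable earnings threshold, in dollars
    direct_loan_share: float    # fraction of students using Direct Loans
    net_tuition_revenue: float  # annual, in dollars

    @property
    def gap_pct(self) -> float:
        """Distance from the benchmark; negative means below the threshold."""
        return (self.median_earnings - self.benchmark) / self.benchmark

    @property
    def borrower_linked_revenue(self) -> float:
        return self.net_tuition_revenue * self.direct_loan_share


def screen(records, watch_margin=0.10):
    """Keep programs below the benchmark or within `watch_margin` above it,
    ordered by the revenue tied to Direct Loan borrowers."""
    flagged = [r for r in records if r.gap_pct < watch_margin]
    return sorted(flagged, key=lambda r: r.borrower_linked_revenue, reverse=True)


# Hypothetical portfolio.
records = [
    ProgramRecord("P-101", 52_000, 55_000, 0.90, 3_000_000),
    ProgramRecord("P-102", 42_000, 60_000, 0.40, 2_000_000),
    ProgramRecord("P-103", 80_000, 50_000, 0.70, 5_000_000),
]

for r in screen(records):
    print(f"{r.program_code}: gap {r.gap_pct:+.1%}, "
          f"borrower-linked revenue ${r.borrower_linked_revenue:,.0f}")
```

Sorting by borrower-linked revenue rather than by gap alone reflects the point made earlier: a program well below the threshold matters less financially if few of its students borrow.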

Institutions whose programs clear the threshold comfortably have a story to tell. At a time when public conversation about the value of higher education is intensifying, demonstrating strong graduate earnings outcomes is a genuine recruiting and marketing asset.

Much about the earnings test is still evolving. But the implementation timeline is not. The institutions with options are the ones that know which programs carry significant risk (how many students, how much revenue could be affected, and which enrollment scenarios trigger institutional stress). That modeling requires integrating data sources most institutions haven’t connected before, running stress tests across enrollment sensitivities, and translating compliance risk into financial planning language.

The technical work should start now, not in 2027 when the first measurements begin. We’re having these conversations now and welcome the opportunity to help you model how your portfolio looks under several earnings test scenarios.

Learn more about how UMB Bank, n.a. Public Finance can support your organization’s financing and capital needs, or contact us to be connected with a public finance specialist.

Disclosure

This communication is provided for informational purposes only. UMB Bank, n.a. and UMB Financial Corporation are not liable for any errors, omissions, or misstatements. This is not an offer or solicitation for the purchase or sale of any financial instrument, nor a solicitation to participate in any trading strategy, nor an official confirmation of any transaction. The information is believed to be reliable, but we do not warrant its completeness or accuracy. Past performance is no indication of future results. The numbers cited are for illustrative purposes only. UMB Financial Corporation, its affiliates, and its employees are not in the business of providing tax or legal advice. Any materials or tax‐related statements are not intended or written to be used, and cannot be used or relied upon, by any such taxpayer for the purpose of avoiding tax penalties. Any such taxpayer should seek advice based on the taxpayer’s particular circumstances from an independent tax advisor. The opinions expressed herein are those of the author and do not necessarily represent the opinions of UMB Bank or UMB Financial Corporation.

Products, Services and Securities offered through UMB Bank, n.a. Capital Markets Division and UMB Financial Services, Inc. are: NOT FDIC INSURED | MAY LOSE VALUE | NOT BANK GUARANTEED